Understanding Deep Knowledge Function: AI’s Reasoning Engine

Introducing the Concept of Deep Knowledge Function

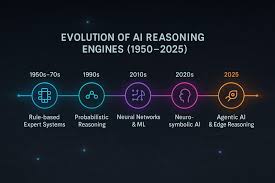

In the rapidly evolving landscape of artificial intelligence, a new paradigm is emerging that moves beyond simple pattern recognition towards genuine cognitive reasoning: the Deep Knowledge Function. This concept represents a class of advanced AI systems designed not merely to process data, but to understand, reason, and apply knowledge in a manner that mimics deep human expertise. The Deep Knowledge Function integrates the powerful representation learning of deep neural networks with structured, symbolic reasoning, enabling machines to grapple with complex, multi-step problems that require a synthesis of facts, rules, and context. It is the cornerstone for developing AI that can explain its decisions, adapt to novel situations, and operate reliably in high-stakes environments. Understanding the Deep Knowledge Function is essential for grasping the next frontier of artificial intelligence.

The term “Deep Knowledge Function” itself signifies a dual-layered capability. The “Deep” component refers to the hierarchical feature extraction prowess of deep learning models, which can discern intricate patterns from vast amounts of raw, unstructured data like text, images, and sensor readings. The “Knowledge Function” aspect points to the system’s ability to manipulate this extracted information using logical rules, ontological relationships, and causal models. Unlike a standard predictive model that might identify a tumor in a medical scan, a system governed by a Deep Knowledge Function could also infer the tumor’s likely type, suggest potential treatment options based on medical literature and patient history, and explain the chain of reasoning behind its recommendation. This transforms AI from a black-box predictor into a transparent reasoning partner.

The development of the Deep Knowledge Function marks a significant shift from data-centric AI to knowledge-centric AI. Traditional machine learning models are heavily reliant on statistical correlations found in their training datasets. In contrast, a system leveraging a Deep Knowledge Function seeks to build an internal model of how the world works. It can handle ambiguity, incorporate common-sense knowledge, and perform counterfactual reasoning, asking “what if” questions to explore different scenarios. This makes the Deep Knowledge Function particularly valuable for strategic planning, scientific discovery, and complex system management, where decisions rest not on a single data point but on a web of interconnected information. The pursuit of a robust Deep Knowledge Function is, therefore, closely bound up with the broader pursuit of artificial general intelligence.

The Architectural Foundations of Deep Knowledge Systems

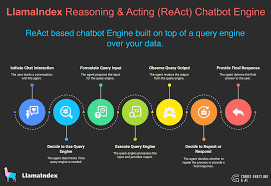

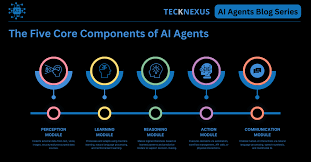

The architecture enabling a Deep Knowledge Function is inherently hybrid, combining the strengths of various AI subfields into a cohesive and powerful whole. At its core, this architecture typically features a deep learning backbone, often composed of transformers or sophisticated recurrent networks, which is responsible for processing perceptual data and converting it into a structured, symbolic representation. This is the system’s interface with the messy, unstructured real world. The outputs from this component—such as identified objects, parsed sentences, or recognized entities—are then passed to a reasoning engine, which operates on a knowledge base. This layered architecture is what allows the Deep Knowledge Function to be both perceptive and logical, bridging the gap between raw data and actionable insight.

A critical element within this architecture is the knowledge graph, which serves as the structured memory for the Deep Knowledge Function. A knowledge graph is a network of entities (people, places, concepts, events) and their relationships. For instance, a Deep Knowledge Function in healthcare would have a knowledge graph connecting diseases, symptoms, genes, drugs, and side effects. When the system processes a new patient’s electronic health record, the deep learning component extracts relevant facts (e.g., “patient has high fever and rash”), and the reasoning engine queries the knowledge graph to find diseases that are linked to these symptoms, while also checking for contraindications with the patient’s current medications. This seamless interaction between perception and symbolic reasoning is the hallmark of an effective Deep Knowledge Function.
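A minimal Python sketch of that interaction, assuming a toy in-memory set of triples. The disease and drug names (`disease_A`, `drug_X`, and so on), the relation names `has_symptom`, `treated_by`, and `interacts_with`, and all helper functions are hypothetical placeholders, not medical data or a real library API. A naive keyword matcher stands in for the deep learning extraction step.

```python
# Toy knowledge graph stored as (subject, relation, object) triples.
# Every entity and relation name here is a hypothetical placeholder.
TRIPLES = {
    ("disease_A", "has_symptom", "fever"),
    ("disease_A", "has_symptom", "rash"),
    ("disease_B", "has_symptom", "fever"),
    ("disease_B", "has_symptom", "rash"),
    ("disease_C", "has_symptom", "fever"),
    ("disease_C", "has_symptom", "cough"),
    ("disease_A", "treated_by", "drug_X"),
    ("disease_B", "treated_by", "drug_Y"),
    ("disease_B", "treated_by", "drug_Z"),
    ("drug_Y", "interacts_with", "drug_W"),
}

SYMPTOM_VOCAB = {"fever", "rash", "cough"}


def extract_symptoms(note):
    """Stand-in for the neural extractor: naive keyword matching on a clinical note."""
    words = {w.strip(".,;").lower() for w in note.split()}
    return words & SYMPTOM_VOCAB


def candidate_diseases(symptoms):
    """Return diseases linked to every observed symptom, sorted by name."""
    diseases = {s for (s, r, _) in TRIPLES if r == "has_symptom"}
    return sorted(
        d for d in diseases
        if all((d, "has_symptom", sym) in TRIPLES for sym in symptoms)
    )


def flag_contraindications(disease, current_meds):
    """Map each treatment for `disease` to the current medications it interacts with."""
    treatments = sorted(o for (s, r, o) in TRIPLES if s == disease and r == "treated_by")
    return {
        t: sorted(
            m for m in current_meds
            if (t, "interacts_with", m) in TRIPLES or (m, "interacts_with", t) in TRIPLES
        )
        for t in treatments
    }


symptoms = extract_symptoms("Patient has high fever and rash.")
for disease in candidate_diseases(symptoms):
    print(disease, flag_contraindications(disease, current_meds={"drug_W"}))
```

Here the perception stage yields symbols (`fever`, `rash`), and the symbolic stage both narrows the candidates and flags `drug_Y` as interacting with the patient's existing `drug_W`. A production system would use a graph database and a learned extractor, but the division of labor is the same.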

Furthermore, the architecture incorporates mechanisms for continuous learning and knowledge integration. A static Deep Knowledge Function would quickly become obsolete. Therefore, these systems are designed with feedback loops that allow them to update their internal knowledge bases and refine their reasoning models based on new data and outcomes. This might involve reinforcement learning, where the system is rewarded for successful reasoning chains, or automated knowledge curation from scientific literature. The ultimate goal of this architectural design is to create a Deep Knowledge Function that is not just a frozen repository of information but a dynamic, evolving intellect capable of growing its expertise over time, much like a human domain expert would through years of practice and study.
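One very simple way to close such a feedback loop, offered here as an assumption rather than a description of any particular system, is to track how often each rule takes part in a reasoning chain whose outcome is later confirmed. The class below uses a Laplace-smoothed success rate, `(s + 1) / (n + 2)`, which is the posterior mean under a uniform Beta(1, 1) prior, as a lightweight stand-in for a full reinforcement learning signal. The rule identifiers and the review threshold are hypothetical.

```python
from collections import defaultdict


class RuleReliability:
    """Track how often each rule appears in reasoning chains that turned out correct.

    A simplified stand-in for reinforcement-style feedback: reliability is the
    Laplace-smoothed success rate (s + 1) / (n + 2), i.e. the posterior mean
    under a uniform Beta(1, 1) prior.
    """

    def __init__(self):
        self.successes = defaultdict(int)
        self.trials = defaultdict(int)

    def record(self, chain, success):
        """Credit (or blame) every rule used in a reasoning chain equally."""
        for rule_id in chain:
            self.trials[rule_id] += 1
            if success:
                self.successes[rule_id] += 1

    def reliability(self, rule_id):
        """Smoothed success rate; an unseen rule starts at 0.5."""
        return (self.successes.get(rule_id, 0) + 1) / (self.trials.get(rule_id, 0) + 2)

    def flag_for_review(self, threshold=0.3, min_trials=5):
        """Rules with enough evidence whose reliability has fallen below the threshold."""
        return sorted(
            r for r, n in self.trials.items()
            if n >= min_trials and self.reliability(r) < threshold
        )


tracker = RuleReliability()
tracker.record(["fever_rash_rule", "interaction_rule"], success=True)
for _ in range(5):
    tracker.record(["outdated_dosage_rule"], success=False)
print(tracker.flag_for_review())
```

Crediting every rule in a chain equally is the crudest possible credit assignment; it is chosen here only to keep the sketch short.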

Distinguishing Deep Knowledge from Shallow AI Models

It is crucial to distinguish the capabilities of a Deep Knowledge Function from those of what can be termed “shallow” AI models. Shallow models, which include many standard machine learning algorithms, excel at finding correlations within specific, well-defined datasets. A shallow model might be highly accurate at predicting customer churn based on historical data, but it cannot explain why a customer might leave, nor can it devise a strategic intervention to prevent it. Its understanding is surface-level and brittle; if the data distribution changes significantly, its performance plummets. The Deep Knowledge Function, by contrast, aims for a causal, mechanistic understanding that is robust and transferable across related domains.

The difference becomes stark when faced with novelty and the need for abstraction. A shallow image classifier trained on millions of pictures of cats and dogs may fail miserably when presented with a cartoon drawing of a cat, as it lacks a conceptual model of “cat-ness.” A system with a Deep Knowledge Function, however, would have encoded knowledge about the essential features of a cat (e.g., whiskers, tail, typical body shape) and could reason that the cartoon, while stylized, still embodies these features. This ability to abstract and generalize from limited examples is a key differentiator. The Deep Knowledge Function enables systems to perform well in low-data regimes by leveraging their pre-existing knowledge base, whereas shallow models are notoriously data-hungry.

Another critical distinction lies in transparency and explainability. The decision-making process of a purely statistical model, such as a large neural network trained only to map inputs to outputs, is often an inscrutable web of millions of parameters, making it a “black box.” This opacity is a serious obstacle in high-consequence fields like medicine or finance. A Deep Knowledge Function is designed to be interpretable. Because it operates on a foundation of symbolic logic and structured knowledge, it can retrace its reasoning steps and provide a clear, human-understandable justification for its output. It can state, “I recommend this drug because the patient’s genomic profile indicates sensitivity, and it has fewer interactions with their other medications, as per the clinical guidelines.” This capacity for reasoned explanation is not an add-on but a fundamental property of the Deep Knowledge Function itself.

Core Mechanisms: Reasoning and Inference in Deep Knowledge

The true power of a Deep Knowledge Function is unleashed through its sophisticated reasoning and inference mechanisms. These mechanisms allow the system to go beyond the information explicitly given, to draw new conclusions, and to solve problems dynamically. One of the primary forms of reasoning employed is logical inference, often using frameworks like first-order logic or probabilistic logic. Given a set of rules (e.g., “If a metal is exposed to oxygen and moisture, it will corrode”) and facts (e.g., “This iron gate is exposed to rain,” together with background knowledge that iron is a metal, rain supplies moisture, and outdoor air contains oxygen), the Deep Knowledge Function can deduce new facts (“The iron gate will corrode”). This deductive reasoning is foundational for tasks like theorem proving and fault diagnosis in complex systems.

Beyond deduction, a robust Deep Knowledge Function must also master abductive and causal reasoning. Abductive reasoning, or inference to the best explanation, is crucial for diagnostic tasks. For example, a patient presents with a set of symptoms. The Deep Knowledge Function can abduce the most likely disease from its knowledge base that would explain all the observed symptoms, even weighing probabilities and considering rare conditions. Causal reasoning is an even more advanced capability, allowing the system to understand cause-and-effect relationships. This enables the Deep Knowledge Function to answer interventional questions (“What would happen if we administer this drug?”) and counterfactual questions (“Would the patient have recovered if they had been given the drug earlier?”). This causal understanding is what separates true intelligence from mere curve-fitting.
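Abductive ranking can be sketched as scoring each candidate disease by how well it explains the observed symptoms. This minimal Python example assumes a naive Bayes model (exactly one disease is present and symptoms are conditionally independent given it) plus a small “leak” probability for symptoms a hypothesis does not explain. All priors, likelihoods, and disease names are invented for illustration.

```python
import math

# Invented priors P(disease) and likelihoods P(symptom | disease); not clinical data.
PRIOR = {"common_flu": 0.10, "allergy": 0.05, "rare_disease_R": 0.001}
LIKELIHOOD = {
    "common_flu": {"fever": 0.9, "cough": 0.8},
    "allergy": {"rash": 0.7},
    "rare_disease_R": {"fever": 0.9, "rash": 0.95, "joint_pain": 0.9},
}
# Assumed probability that a symptom shows up even though the hypothesis does not explain it.
LEAK = 0.01


def rank_explanations(observed):
    """Rank diseases by posterior probability given the observed symptoms.

    Naive Bayes assumptions: one disease is present, symptoms are conditionally
    independent, and unobserved symptoms are ignored for simplicity.
    """
    log_scores = {}
    for disease, prior in PRIOR.items():
        score = math.log(prior)
        for symptom in observed:
            score += math.log(LIKELIHOOD[disease].get(symptom, LEAK))
        log_scores[disease] = score
    # Normalize with the log-sum-exp trick to avoid numerical underflow.
    top = max(log_scores.values())
    z = sum(math.exp(s - top) for s in log_scores.values())
    posterior = {d: math.exp(s - top) / z for d, s in log_scores.items()}
    return sorted(posterior.items(), key=lambda item: item[1], reverse=True)


for disease, p in rank_explanations({"fever", "rash", "joint_pain"}):
    print(f"{disease}: {p:.3f}")
```

With these made-up numbers, the rare condition ranks first for fever, rash, and joint pain because it is the only hypothesis that accounts for all three, while fever plus cough is best explained by the common condition.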

These reasoning processes are not strictly sequential; they are often interleaved and performed over a massive, interconnected knowledge graph. The Deep Knowledge Function might use path-finding algorithms to discover hidden relationships between entities, or employ graph neural networks to learn representations that are informed by the graph structure. For instance, in drug discovery, the system might reason that if protein A is inhibited by drug B, and protein A regulates gene C, which is associated with disease D, then drug B could be a candidate for treating disease D. This multi-hop, relational reasoning across a vast biological knowledge graph is a quintessential application of a Deep Knowledge Function, demonstrating its ability to synthesize information from disparate sources to generate novel, actionable hypotheses.
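The drug-discovery chain above is a path-finding problem over a directed graph. The minimal breadth-first search sketch below uses the placeholder entities from the example plus two hypothetical distractor edges (`binds`, `protein_E`, `gene_F`), and returns the shortest relation path from a drug to a disease. A path found this way is a hypothesis to be tested, not evidence of efficacy.

```python
from collections import deque

# Directed (head, relation, tail) edges; the last two are hypothetical distractors.
EDGES = [
    ("drug_B", "inhibits", "protein_A"),
    ("protein_A", "regulates", "gene_C"),
    ("gene_C", "associated_with", "disease_D"),
    ("drug_B", "binds", "protein_E"),
    ("protein_E", "regulates", "gene_F"),
]


def build_adjacency(edges):
    """Index outgoing (relation, tail) pairs by head entity."""
    adj = {}
    for head, rel, tail in edges:
        adj.setdefault(head, []).append((rel, tail))
    return adj


def find_path(adj, start, goal, max_hops=4):
    """Breadth-first search for the shortest relation path from start to goal."""
    queue = deque([(start, [])])
    seen = {start}
    while queue:
        node, path = queue.popleft()
        if node == goal:
            return path
        if len(path) == max_hops:
            continue  # do not extend paths beyond the hop limit
        for rel, nxt in adj.get(node, []):
            if nxt not in seen:
                seen.add(nxt)
                queue.append((nxt, path + [(node, rel, nxt)]))
    return None


adjacency = build_adjacency(EDGES)
for head, rel, tail in find_path(adjacency, "drug_B", "disease_D"):
    print(f"{head} --{rel}--> {tail}")
```

The `max_hops` limit keeps the search tractable on large graphs; on a real biological graph one would also weight or filter edges by evidence strength.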

Applications in Complex Decision-Support Systems

One of the most impactful applications of the Deep Knowledge Function is in the domain of complex decision-support systems. In fields such as healthcare, finance, and logistics, professionals are often overwhelmed by the volume and complexity of information they must synthesize to make critical choices. A clinical decision-support system powered by a Deep Knowledge Function can integrate a patient’s medical history, real-time vital signs, genomic data, and the latest clinical research to assist a doctor in diagnosing a rare disease or formulating a personalized treatment plan. The system doesn’t just provide an answer; it offers a ranked list of possibilities, each backed by evidence and a clear chain of reasoning, thereby augmenting the physician’s expertise rather than replacing it.

In the financial sector, the Deep Knowledge Function could transform risk management and strategic investment. Traditional quantitative models are based on historical market data and can be blind to “black swan” events or shifting regulatory landscapes. A financial AI equipped with a Deep Knowledge Function can continuously analyze not only market feeds but also news articles, earnings reports, and geopolitical events. It can reason about the potential impact of a new trade policy on a specific supply chain and, consequently, on a portfolio of stocks. This ability to understand second- and third-order effects, by chaining together knowledge about corporate ownership, global logistics, and political risk, offers a strategic advantage that simpler algorithms struggle to match.
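Chaining second- and third-order effects can be sketched as propagating a shock through a weighted dependency graph. The Python below is a deliberately simplified, hypothetical model: every node name and sensitivity is invented, effects are assumed to be linear and to multiply along each path, and contributions arriving over different paths are summed up to a fixed number of hops.

```python
# Hypothetical dependency graph: (source, target, sensitivity). A shock of size x at
# the source is assumed to cause a change of x * sensitivity at the target.
EDGES = [
    ("new_tariff", "supplier_S", 0.8),
    ("supplier_S", "manufacturer_M", 0.5),
    ("supplier_S", "manufacturer_N", 0.2),
    ("manufacturer_M", "retailer_R", 0.4),
]


def propagate(edges, source, shock, max_order=3):
    """Return {node: (total_impact, order)}, where order is the hop at which the node is first hit."""
    out = {}
    for src, dst, w in edges:
        out.setdefault(src, []).append((dst, w))
    result = {}
    frontier = {source: shock}
    for order in range(1, max_order + 1):
        reached = {}
        for node, amount in frontier.items():
            for dst, w in out.get(node, []):
                reached[dst] = reached.get(dst, 0.0) + amount * w
        for node, amount in reached.items():
            total, first = result.get(node, (0.0, order))
            result[node] = (total + amount, first)
        frontier = reached
    return result


def portfolio_impact(impacts, weights):
    """Weighted sum of per-holding impacts; holdings the shock never reaches contribute 0."""
    return sum(w * impacts.get(name, (0.0, None))[0] for name, w in weights.items())


# A hypothetical 10% adverse shock at the policy node.
impacts = propagate(EDGES, "new_tariff", shock=-0.10)
portfolio = {"manufacturer_M": 0.5, "retailer_R": 0.3, "unrelated_utility": 0.2}
print(impacts)
print(portfolio_impact(impacts, portfolio))
```

The retailer is only a third-order effect of the tariff, yet it still moves the portfolio. Real markets are nonlinear and feedback-driven, so this linear pass is a starting intuition rather than a risk model.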

Furthermore, the Deep Knowledge Function is indispensable for managing large-scale, interconnected infrastructure systems like smart grids or urban traffic networks. A smart grid management system must balance power generation, distribution, and consumption in real time. A shallow AI might optimize for immediate cost, but a system with a Deep Knowledge Function can reason about long-term sustainability, equipment wear and tear, weather predictions, and even social factors like public events that affect energy demand. It can create robust plans that are resilient to unexpected failures, simulating various scenarios before committing to an action. This holistic, long-horizon planning capability, grounded in a deep model of the physical and social world, is a direct result of implementing a sophisticated Deep Knowledge Function at the core of these mission-critical systems.
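Simulating scenarios before committing to an action can be illustrated by comparing decision rules over a table of simulated costs. In this hypothetical Python sketch, the plans, scenarios, probabilities, and costs are all made up; the point is only that the plan that is cheapest in the normal case (and here even on average) is not the plan with the lowest worst-case cost.

```python
# Hypothetical simulated operating costs for each candidate plan under each scenario.
COSTS = {
    "run_gas_peaker": {"normal": 100, "heatwave": 140, "line_failure": 150},
    "draw_battery": {"normal": 80, "heatwave": 170, "line_failure": 120},
    "shift_demand": {"normal": 90, "heatwave": 130, "line_failure": 160},
}
# Assumed scenario probabilities, e.g. derived from weather and event forecasts.
PROBS = {"normal": 0.7, "heatwave": 0.2, "line_failure": 0.1}


def myopic_choice(costs):
    """Optimize only for the normal case, ignoring other scenarios."""
    return min(costs, key=lambda plan: costs[plan]["normal"])


def expected_cost_choice(costs, probs):
    """Minimize probability-weighted cost across all simulated scenarios."""
    return min(costs, key=lambda plan: sum(probs[s] * c for s, c in costs[plan].items()))


def robust_choice(costs):
    """Minimize the worst-case cost, guarding against the least favorable scenario."""
    return min(costs, key=lambda plan: max(costs[plan].values()))


print(myopic_choice(COSTS), expected_cost_choice(COSTS, PROBS), robust_choice(COSTS))
```

Which rule to use is a design choice: minimizing the worst case is conservative and suits systems where a failure is unacceptable, while expected cost suits settings where occasional bad outcomes can be absorbed.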

The Role of Deep Knowledge in Autonomous Systems and Robotics

For autonomous systems like self-driving cars and advanced robotics, the Deep Knowledge Function is the key to achieving true situational awareness and robust operation in unstructured environments. While perception algorithms can identify objects like cars, pedestrians, and traffic signs, it is the Deep Knowledge Function that endows the system with an understanding of traffic laws, social norms, and the intentions of other agents. It allows the vehicle to reason that a ball rolling into the street might be followed by a child, or that a cyclist’s hand signal indicates an imminent turn. This common-sense reasoning is what separates a competent autonomous agent from a mere sensor-fusion and control system.

In industrial robotics, the application of a Deep Knowledge Function enables flexible manufacturing. A traditional robot on an assembly line is programmed for a single, repetitive task. A robot imbued with a Deep Knowledge Function, however, can understand the assembly process for different products. It can reason about the geometric constraints of parts, the required tools for each step, and the sequence of operations. If it encounters an unexpected situation, such as a missing component or a part placed slightly out of position, it can dynamically replan its actions to complete the task. This ability to represent goals, understand physical constraints, and generate plans on the fly is a direct manifestation of a powerful Deep Knowledge Function operating in the physical world.
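Replanning of this kind can be sketched with a classical STRIPS-style planner: each action has preconditions plus add and delete effects, and a breadth-first search finds the shortest action sequence that reaches the goal. The actions and fact names below are hypothetical. When the initial state lacks a bolt, the same search simply returns a longer plan that restocks it first.

```python
from collections import deque
from typing import NamedTuple


class Action(NamedTuple):
    name: str
    pre: frozenset     # facts that must hold before acting
    add: frozenset     # facts made true by the action
    delete: frozenset  # facts made false by the action


def act(name, pre=(), add=(), delete=()):
    return Action(name, frozenset(pre), frozenset(add), frozenset(delete))


# Hypothetical assembly-cell actions.
ACTIONS = [
    act("fetch_bolt", pre={"bolt_in_bin"}, add={"holding_bolt"}, delete={"bolt_in_bin"}),
    act("restock_bolt", add={"bolt_in_bin"}),
    act("align_part", pre={"part_on_fixture"}, add={"part_aligned"}),
    act("fasten", pre={"holding_bolt", "part_aligned"}, add={"assembled"}, delete={"holding_bolt"}),
]


def plan(initial, goal, actions=ACTIONS):
    """Breadth-first search over world states; returns the shortest list of action names."""
    start = frozenset(initial)
    queue = deque([(start, [])])
    seen = {start}
    while queue:
        state, steps = queue.popleft()
        if goal <= state:
            return steps
        for a in actions:
            if a.pre <= state:
                nxt = (state - a.delete) | a.add
                if nxt not in seen:
                    seen.add(nxt)
                    queue.append((nxt, steps + [a.name]))
    return None  # goal unreachable from this state


print(plan({"bolt_in_bin", "part_on_fixture"}, {"assembled"}))
# Replanning after the bolt bin turns out to be empty:
print(plan({"part_on_fixture"}, {"assembled"}))
```

Uninformed search is fine for a handful of facts; real robotic planners use heuristics and must also handle continuous geometry, which is where the efficiency concerns raised below come in.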

The challenges in this domain are immense, primarily due to the need for real-time performance and absolute safety. The Deep Knowledge Function for robotics must be highly efficient, performing its complex reasoning within strict time constraints. It also requires a rich knowledge base of physical commonsense—understanding concepts like gravity, friction, and material properties—that humans take for granted. Research in cognitive robotics focuses on building these detailed world models and integrating them with planning and control algorithms. The success of this endeavor, creating robots that can safely and intelligently collaborate with humans in dynamic settings like homes and hospitals, hinges entirely on the maturation and reliability of their underlying Deep Knowledge Function.

Challenges in Developing and Implementing Deep Knowledge Functions

Despite its immense potential, the development and implementation of a robust Deep Knowledge Function present formidable challenges. The first and perhaps most significant hurdle is knowledge acquisition and representation. Curating the vast, high-quality knowledge bases required for deep reasoning is an enormous manual effort. While automated knowledge extraction from text using natural language processing has advanced, it still struggles with ambiguity, nuance, and integrating information from conflicting sources. Deciding how to represent this knowledge—choosing the right ontologies and logical formalisms—is a complex task that requires deep domain expertise. Building the foundational knowledge for a Deep Knowledge Function is as much an art as it is a science.

Another major challenge is the integration of the neural (sub-symbolic) and symbolic components. Getting the deep learning perception module to output symbols that are perfectly aligned with the reasoning engine’s expectations is non-trivial, and errors in perception can propagate into the symbolic layer and lead to catastrophic reasoning failures. This difficulty of tying symbols to the perceptual data they stand for is closely related to the classic “symbol grounding problem.” Furthermore, the entire system ideally needs to be trained end-to-end to some degree, but combining the differentiable training of neural networks with the discrete, logical nature of symbolic reasoning is a cutting-edge research problem. Developing stable and efficient training paradigms for these hybrid architectures is essential for scaling the Deep Knowledge Function to more complex domains.

Finally, there are challenges related to computational complexity and scalability. Logical reasoning over large knowledge graphs can be computationally expensive, especially when dealing with uncertainty and probabilistic inference. As the knowledge base grows, the time required for the Deep Knowledge Function to arrive at a conclusion can become prohibitive for real-time applications. Researchers are tackling this through methods like knowledge graph embedding, which represents entities in a continuous vector space, allowing for faster, approximate reasoning. Ensuring that the Deep Knowledge Function can scale to the size of the internet while remaining efficient and responsive is one of the grand challenges in the field of artificial intelligence, crucial for realizing the full vision of machines that can think and reason like humans.
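Knowledge graph embedding can be illustrated with TransE, one well-known method that treats a triple (head, relation, tail) as plausible when the head vector plus the relation vector lands close to the tail vector. The two-dimensional embeddings below are hand-picked for readability rather than learned, so treat this as a sketch of the scoring rule only. Answering a query then reduces to ranking candidate tails by distance instead of running a logical search.

```python
import math

# Hand-picked 2-D embeddings (hypothetical; a real system learns these from the graph).
ENTITY = {
    "paris": (1.0, 0.0),
    "france": (1.0, 1.0),
    "berlin": (3.0, 0.0),
    "germany": (3.0, 1.0),
}
RELATION = {"capital_of": (0.0, 1.0)}


def transe_score(head, relation, tail):
    """TransE-style plausibility: the negative distance between head + relation and tail."""
    h, r, t = ENTITY[head], RELATION[relation], ENTITY[tail]
    translated = (h[0] + r[0], h[1] + r[1])
    return -math.dist(translated, t)


def rank_tails(head, relation):
    """Answer (head, relation, ?) approximately by ranking every entity by score."""
    return sorted(ENTITY, key=lambda tail: transe_score(head, relation, tail), reverse=True)


print(rank_tails("berlin", "capital_of"))
```

In practice, the head entity itself and triples already known to be true are usually filtered out of the ranking, and embeddings have hundreds of dimensions learned by gradient descent.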

The Future Trajectory and Ethical Implications of Deep Knowledge AI

The future trajectory of the Deep Knowledge Function points towards even greater integration, autonomy, and generality. We are moving towards systems that can not only reason with pre-existing knowledge but also actively discover new knowledge through interaction with the world and through automated scientific experimentation. Imagine a Deep Knowledge Function that can read scientific papers, formulate hypotheses, design and run simulated experiments, analyze the results, and then update its own knowledge base—a closed-loop AI scientist. This would dramatically accelerate the pace of discovery in fields from materials science to medicine, pushing the Deep Knowledge Function from a tool for applying knowledge to a tool for creating it.

As these systems become more capable, they will inevitably play a larger role in strategic decision-making at corporate and governmental levels. This raises profound ethical implications that must be addressed proactively. A Deep Knowledge Function is only as unbiased as the knowledge it is trained on. If its knowledge base contains societal biases or reflects flawed human reasoning, it will perpetuate and potentially amplify them at scale. Ensuring fairness, accountability, and transparency in these powerful reasoning systems is paramount. The “right to an explanation” for decisions made by AI will require the Deep Knowledge Function to have unparalleled capabilities for generating interpretable and auditable reasoning trails.

Ultimately, the goal is not to create an omniscient oracle but to develop a collaborative intelligence that augments human intellect. The most powerful applications of the Deep Knowledge Function will be as an assistant to human experts—a doctor’s aide, a scientist’s partner, a strategist’s simulator. The future will likely see a symbiotic relationship where humans provide the overarching goals, ethical guidance, and creative leaps, while the Deep Knowledge Function handles the brute-force reasoning over complex information spaces, ensuring consistency, uncovering hidden insights, and managing complexity. Navigating this future successfully will require a concerted effort from technologists, ethicists, and policymakers to ensure that the power of the Deep Knowledge Function is harnessed for the benefit of all humanity.

This Article Was Written by Pausslot